Imagine you and your friends are trying to agree on pizza toppings in a group chat. If everyone shouts at once, nothing gets decided. If everyone stays silent, nothing changes either. The sweet spot is a chat where messages flow, people react, and a clear choice emerges. For years, some scientists thought the best “thinking” machines worked the same way: right at the line between total order and total chaos. Mitchell, Hraber, and Crutchfield took a hard look at that idea and found the story is more complicated than the slogan.

Their work revisits two classics: Langton’s lambda (a single number giving the fraction of entries in a rule’s lookup table that output a “1”) and Packard’s experiment evolving simple grid worlds, called cellular automata, to do a job: decide whether a starting pattern has more 1s than 0s, then flip the entire grid to all 1s or all 0s accordingly. Think of it like a super-fast group vote that must end in a clear yes or no. The “edge of chaos” idea says the best rules should live near special lambda values where behavior shifts from tidy to wild. Packard reported that his evolved rules clustered near those “critical” zones. The study explains lambda, the phase-transition-like behavior it was meant to summarize, and how Packard set up his genetic algorithm to evolve better rules generation after generation.
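To make lambda and the voting task concrete, here is a minimal Python sketch. It assumes binary cells on a ring, a neighborhood radius of 3 (7 cells, so 128 rule-table entries), and 0 as the “quiescent” state; the local-majority rule and the helper names (`rule_table`, `langton_lambda`, `classifies`) are hypothetical illustrations, not code from the paper. It shows that lambda is read straight off the rule table: the majority rule scores exactly 1/2, yet it fails the task on a simple block pattern that freezes in place.

```python
import itertools

R = 3                     # assumed radius: each cell sees 3 neighbors per side
WIDTH = 2 * R + 1         # 7-cell neighborhoods -> 2**7 = 128 table entries


def rule_table(update):
    """Tabulate a local update function over every possible neighborhood."""
    return {nbhd: update(nbhd)
            for nbhd in itertools.product((0, 1), repeat=WIDTH)}


def langton_lambda(table):
    """Fraction of table entries that output 1 (0 is the quiescent state)."""
    return sum(table.values()) / len(table)


def step(cells, table):
    """One synchronous update of a ring of cells (periodic boundaries)."""
    n = len(cells)
    return [table[tuple(cells[(i + k) % n] for k in range(-R, R + 1))]
            for i in range(n)]


def correct_answer(cells):
    """Task target: 1 if 1s are the majority of the start pattern, else 0."""
    return 1 if 2 * sum(cells) > len(cells) else 0


def classifies(cells, table, max_steps):
    """True if the ring reaches the correct all-0s or all-1s state in time."""
    target = [correct_answer(cells)] * len(cells)
    for _ in range(max_steps):
        cells = step(cells, table)
        if cells == target:
            return True
    return False


# Hypothetical example rule: each cell copies the majority of its 7-cell window.
majority = rule_table(lambda nbhd: int(sum(nbhd) > R))
print(langton_lambda(majority))   # 0.5

# 15 cells, 8 zeros then 7 ones: the right answer is "all 0s",
# but the two blocks are a fixed point of the majority rule, so it never decides.
blocks = [0] * 8 + [1] * 7
print(classifies(blocks, majority, max_steps=30))   # False
```

Note that lambda is computed from the table alone, without running the automaton, which is why two rules with the same lambda can behave very differently on the task.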

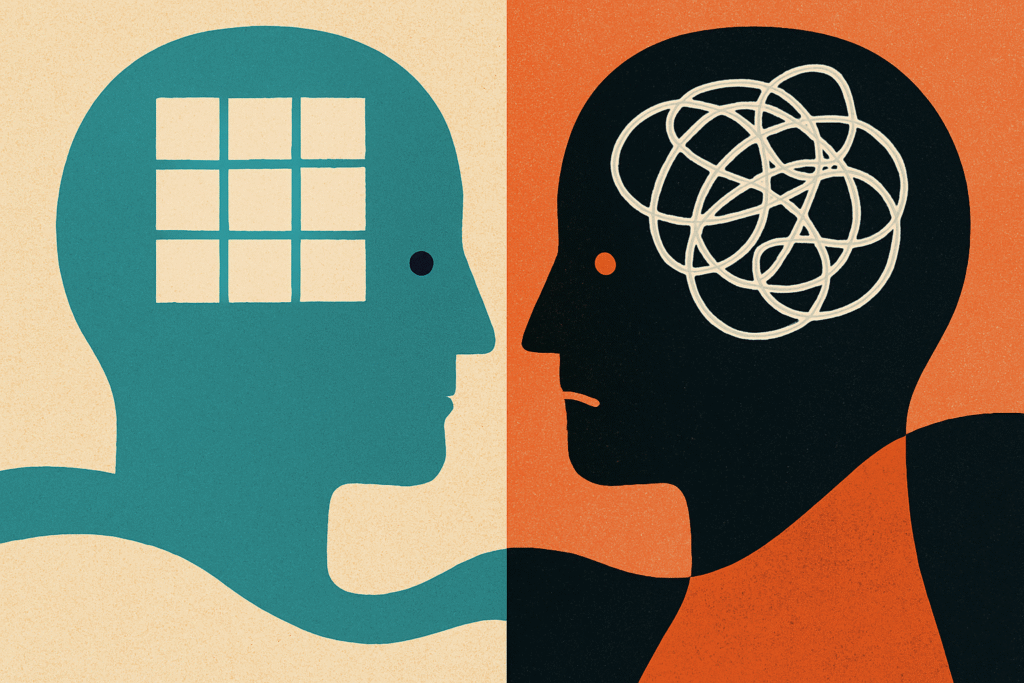

Here’s the twist. A well-known rule, the GKL rule, often solves the task by sending out little “signals” that spread until the whole grid agrees, like ripples that settle a debate. But it is not perfect, since it still misclassifies some starting patterns, and its lambda sits smack in the middle at 1/2, not near the supposed critical edges. The authors also show why good rules for this job naturally hover near 1/2: the task treats 0s and 1s symmetrically, so a rule whose lambda strays far from 1/2 is biased toward one answer and makes more mistakes. In their own evolution runs, populations were first pulled toward 1/2 by simple combinatorics (“drift”: far more possible rules have lambda near 1/2 than anywhere else) and then split to either side of 1/2 as new strategies emerged, a symmetry breaking that shaped progress. That’s a big reason their results didn’t back the “edge” claim.
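The GKL behavior and the drift argument can both be checked numerically. The Python sketch below assumes the GKL rule as it is usually stated for radius 3: a cell in state 0 takes the majority vote of itself and the cells 1 and 3 sites to its left, and a cell in state 1 takes the majority vote of itself and the cells 1 and 3 sites to its right. The ring of 149 cells, the step budget of twice the ring size, the uniformly spread starting densities, and all function names are assumptions made for this illustration, not the authors’ code. It checks that GKL’s lambda is exactly 1/2, estimates how often GKL reaches the correct uniform state, and measures what share of all 2^128 rule tables have lambda between 0.4 and 0.6.

```python
import itertools
import random
from math import comb

R = 3  # assumed radius: 7-cell neighborhoods, 128 rule-table entries


def majority3(a, b, c):
    """Majority vote of three bits."""
    return 1 if a + b + c >= 2 else 0


def gkl_local(nbhd):
    """GKL update for one cell; nbhd holds offsets -3..+3, center at index 3."""
    s = nbhd[3]
    if s == 0:
        # A 0-cell votes with itself and the cells 1 and 3 sites to its left.
        return majority3(s, nbhd[2], nbhd[0])
    # A 1-cell votes with itself and the cells 1 and 3 sites to its right.
    return majority3(s, nbhd[4], nbhd[6])


def gkl_lambda():
    """Fraction of all 128 neighborhoods that GKL maps to 1."""
    outputs = [gkl_local(nb)
               for nb in itertools.product((0, 1), repeat=2 * R + 1)]
    return sum(outputs) / len(outputs)


def gkl_step(cells):
    """One synchronous GKL update on a ring (periodic boundaries)."""
    n = len(cells)
    return [gkl_local(tuple(cells[(i + k) % n] for k in range(-R, R + 1)))
            for i in range(n)]


def run(cells, max_steps):
    """Iterate GKL until the ring is uniform or the step budget runs out."""
    for _ in range(max_steps):
        if len(set(cells)) == 1:
            break
        cells = gkl_step(cells)
    return cells


def performance(n_cells=149, trials=100, seed=0):
    """Share of random start patterns that GKL settles to the correct answer.

    The number of 1s is drawn uniformly (an assumption for this sketch);
    n_cells should be odd so 1s and 0s can never tie.
    """
    rng = random.Random(seed)
    correct = 0
    for _ in range(trials):
        k = rng.randint(0, n_cells)                # number of 1s at the start
        ones = set(rng.sample(range(n_cells), k))
        cells = [1 if i in ones else 0 for i in range(n_cells)]
        target = 1 if 2 * k > n_cells else 0
        if run(cells, max_steps=2 * n_cells) == [target] * n_cells:
            correct += 1
    return correct / trials


def share_of_rule_space(lo, hi, size=128):
    """Fraction of all 2**size binary rule tables whose lambda is in [lo, hi]."""
    count = sum(comb(size, k) for k in range(size + 1) if lo <= k / size <= hi)
    return count / 2 ** size


print(gkl_lambda())                    # 0.5
print(performance(trials=100))         # estimated success rate on 100 starts
print(share_of_rule_space(0.4, 0.6))   # share of rule tables near lambda = 1/2
```

The last function explains the drift without simulating anything: the number of rule tables with k of their 128 entries set to 1 is the binomial coefficient C(128, k), which peaks at k = 64, so random mutation and crossover tend to land near lambda = 1/2 unless selection pushes elsewhere.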

Why should you care? Because it’s a reminder to be cautious with catchy rules of thumb. The authors show that what looks like a universal recipe (“always operate at the edge”) may instead reflect the task at hand and the way success is measured. In everyday life, that means: don’t assume the most exciting, high-noise setting (more apps, more tabs, more chats) is where you think best. Sometimes the winning setup is balanced, not extreme. It also means symmetry and biases matter: if your decision rule quietly favors one side, you may keep landing on the wrong choice. Test ideas against varied cases, not just the ones that flatter them. That’s the deeper lesson of their study: useful computation grows from clear goals, fair tests, and smart strategies, not from chasing an edgy vibe.

Reference:

Mitchell, M., Hraber, P. T., & Crutchfield, J. P. (1993). Revisiting the Edge of Chaos: Evolving Cellular Automata to Perform Computations. Complex Systems, 7(2), 89–130. https://doi.org/10.48550/arXiv.adap-org/9303003.

Privacy Notice & Disclaimer:

This blog provides simplified educational science content, created with the assistance of both humans and AI. It may omit technical details, is provided “as is,” and does not collect personal data beyond basic anonymous analytics. For full details, please see our Privacy Notice and Disclaimer. Read About This Blog & Attribution Note for AI-Generated Content to know more about this blog project.